Last year, I sat in front of my laptop and wrote down some thoughts about AI: its charm, what it is changing in our industry and beyond, and what we should be careful about. At the time, we were still swimming in the initial stream of adoption, trying to discern whether we were facing a simple tool or a fundamental restructuring of our professional lives.

People spoke in futures. What AI would change. What it might automate. What it could do to our work and to our personal lives. I argued for productive skepticism, a habit of questioning and verifying outputs before acting on them, as the highest sign of human intelligence in a world of probabilistic models.

A year has passed. In the timeline of technology, twelve months is an era. AI is no longer a distant industrial process; it is pervasive. It is in the room. It lives in our IDEs, our pull requests, and the rough drafts of our ADRs.

We are no longer asking, at least not primarily, whether AI will affect engineering work. It already does. The more interesting question now is: what kind of relationship are we building with it?

The AI Dev Community: A Space for Engineers to Own the Narrative

Early this year, we founded an internal AI Dev community within the tech department at Bikeleasing. While other very talented colleagues, whom I have the pleasure of working with, launched initiatives to explore general use cases, risks, and roadmaps for the broader company (from n8n workflows to internal, data-privacy-compliant GPTs), our tech community remained niche, focused on the development lifecycle.

The goal was simple but important: we wanted a space where engineers could share experiences and brainstorm how to guide the impact of AI on our culture. I launched the initiative and became a member of the community not as a top-down mandate, but in recognition that leadership must provide direction rather than prohibition. We didn’t want to just choose and use the tools; we wanted to own the narrative of how they used us.

The Cognitive Margins: Where the Value Lives

The early conversation around AI focused almost exclusively on code generation. The demos were seductive: a feature built in minutes, an entire API scaffolded in seconds. It was easy to conclude that the value of AI was simply writing more code, faster - much faster.

We discovered that this narrative often misses the point, at least for us. The greatest benefits do not come from the machine writing the code. In fact, many of us still choose to write much of our code manually.

The real transformation has happened in the cognitive margins.

Documentation is the most visible shift. Many teams know what should be documented, but they fail to do it: the time is not there, the task feels boring, the cognitive load of translating code into documentation is real and, hey, everyone knows it gets outdated very quickly. AI lowers that barrier. In my own CLAUDE.md files, I maintain a strict rule:

treat stale documentation as a bug. Never claim to have completed a task until the documentation is updated.
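One way to make a rule like this mechanically checkable is a small guard that looks at the files touched by a change. The sketch below is only an illustration, not our actual tooling: the `src/` and `docs/` prefixes, the Markdown suffix, and the function names are all assumptions that would need adapting to a real repository.

```python
from pathlib import PurePosixPath

# Hypothetical conventions: source lives under src/, documentation is any
# Markdown file or anything under docs/. Adjust to the real repository layout.
SOURCE_PREFIX = "src/"
DOC_PREFIX = "docs/"
DOC_SUFFIXES = {".md"}


def is_doc(path: str) -> bool:
    """Return True if the path looks like documentation."""
    suffix = PurePosixPath(path).suffix.lower()
    return path.startswith(DOC_PREFIX) or suffix in DOC_SUFFIXES


def is_source(path: str) -> bool:
    """Return True if the path looks like source code (and is not a doc)."""
    return path.startswith(SOURCE_PREFIX) and not is_doc(path)


def docs_look_stale(changed_files: list[str]) -> bool:
    """Flag a change that touches source code but no documentation at all."""
    touched_source = any(is_source(f) for f in changed_files)
    touched_docs = any(is_doc(f) for f in changed_files)
    return touched_source and not touched_docs
```

A check like this is deliberately crude: it cannot tell whether the documentation that changed is the right one, only that none was touched. It is a prompt for a human or an assistant to look, not a verdict.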

AI helps turn tacit, local knowledge into written, shareable thinking. This sounds small until you realize how many expensive problems in engineering are simply failures in communication and knowledge transfer.

Then there is the adherence to “invisible” guidelines. Some of the most important engineering norms (architectural boundaries, naming discipline, error-handling conventions, code organization) are difficult to encode in unit or integration tests. They live in the patterns between the lines or in a separate tool under “technical guidelines”. AI is surprisingly good at spotting deviations and helping teams notice where the codebase is drifting away from its own standards.

And perhaps most practically of all, it has been useful in supporting ADR processes and exploratory design. A prototype that once took days to validate can now often be stood up in hours. Not because AI has magically solved software engineering, but because it makes the first pass much cheaper. It allows you to test whether an idea is merely attractive in the abstract or resilient enough to survive contact with implementation.

The Boldness of the Augmented Engineer

This shift in the cost of analysis has led to a newfound boldness. Since we adopted Claude and similar high-context models, the number of releases per week has increased. But volume is not the most interesting change. What matters is the nature of the changes themselves.

That boldness shows in our engineers. It is not simply that people type less. Nor that AI writes entire systems while we sip espresso and nod philosophically at the screen. The deeper shift is cognitive. People are now changing things that previously would have felt like too much effort to analyze or too risky to touch. When you have a support system that can help you map the dependencies of a complex system in seconds, the psychological barrier to refactoring drops.

This ease of cognitive switching is vital. At our current scale (seven cross-functional teams and interactions with at least two external teams), the ability to jump between repositories without losing the thread is a major asset. We are no longer limited by how much code we can hold in our short-term memory. We are supported by a teammate (the “junior under drugs” as we sometimes call Claude) that never sleeps and has read every line of the repo.

Yet speed without principle or structure is just a faster way to reach disaster. As the barrier to change drops, the questions of responsibility and ownership become more urgent.

The AI Manifesto: Sovereignty in the Diff

To ground this speed, my team drafted a set of AI principles. I cannot praise the team enough for this artifact; it is not a set of bureaucratic hurdles, but a clear articulation of what it means to be a professional in 2026.

The manifesto introduces a couple of very clever principles, among them the Junior Partner Rule:

We treat AI output like the work of a brilliant but overconfident intern: assume it is 80% correct and 100% in need of a peer review.

This mindset shifts the engineer from a passive consumer to an Editor-in-Chief.

We also emphasize that Context is King.

A general model is a librarian, but an AI with deep codebase awareness is a teammate. By being surgical with context (referencing specific files rather than dumping the whole repo) we improve the precision of the results.

And then there is the distinction I wish more teams made explicit: “prototype with vibes, deliver with engineering”.

That, too, feels exactly right to me. Vibe-coding has its place. Exploration matters. Discovery matters. Fast experiments matter. But production systems deserve discipline, readability, explicit error handling, tests, review, and security checks. A prototype is a learning artifact, not a moral loophole.

But the most important section, and the one that defines our culture, is the first principle:

The Human Commits: AI may suggest the lines, but you own the diff. Never commit what you cannot explain or defend in a postmortem.

Two sentences. No tool list, no approved-vendor matrix, no flowchart, no decision tree. Just a bar set at the right place: the moment of accountability.

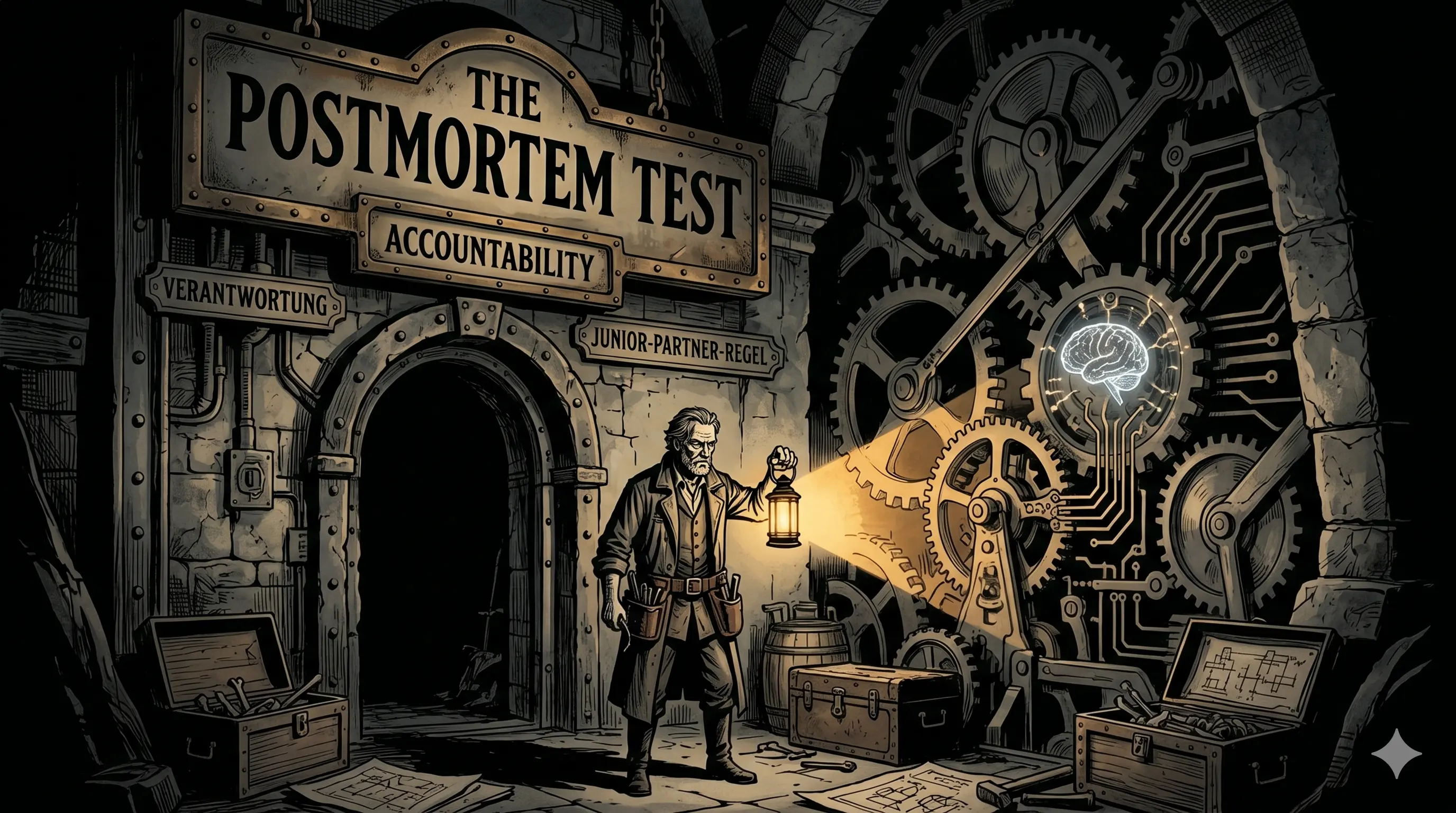

The Postmortem Test

This principle is elegantly simple. It bypasses the need for a thousand-page policy manual by appealing directly to the engineer’s sense of craft. If you are reviewing an AI suggestion, you must ask yourself two questions:

- Can I explain why every non-trivial decision was made here?

- If this causes an incident at 2 AM, can I debug it without re-reading it from scratch?

If the answer to either is “no,” you are not finished with the review. You have merely finished reading.
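For teams that like to make such checks explicit, the two questions can be written down as a tiny self-assessment. This is a minimal sketch with hypothetical names (`PostmortemCheck`, `review_finished`), not part of the manifesto itself; the answers still have to come honestly from the reviewer.

```python
from dataclasses import dataclass


@dataclass
class PostmortemCheck:
    """The reviewer's honest answers to the two Postmortem Test questions."""

    # Can I explain why every non-trivial decision was made here?
    can_explain_decisions: bool
    # If this causes an incident at 2 AM, can I debug it without
    # re-reading it from scratch?
    can_debug_without_rereading: bool

    def review_finished(self) -> bool:
        """The review is done only when both answers are yes."""
        return self.can_explain_decisions and self.can_debug_without_rereading
```

A single "no" means the review is still open, however green the test suite is.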

Accountability does not transfer to the tool. Recent research on the pervasive integration of AI across society highlights a growing concern about accountability, particularly when incidents involve AI-enabled systems. This structural accountability gap is precisely what our manifesto seeks to close. There is a classic IBM maxim that a computer can never be held accountable, and therefore must never make a management decision. The Postmortem Test is our humble way of operationalizing that principle for the modern stack. If a developer merges a 400-line PR because the tests passed, but cannot explain the logic during a failure three weeks later, the failure is human.

This is the price of our new speed. In exchange for boldness, we must maintain an even more rigorous standard of understanding. We must ensure that our foundation is designed, not just generated.

Accountability as a Force Multiplier

My manager and CTO, Michael Maretzke, gave me a piece of advice at the start of our AI journey:

Toni, let’s find a way to ship highly maintainable, high-quality code but faster. And notice the order of those adjectives; it isn’t accidental.

The order matters because speed is a multiplier. It amplifies whatever was already there. A careless team can now create disasters faster; a thoughtful team can reduce uncertainty faster. The tool does not abolish culture. It reveals it.

This is why accountability is not a constraint on velocity. It is the condition of it.

What AI cannot provide is the Fingerspitzengefühl (the fingertip feeling) I wrote about a year ago: the intuition that tells you when an abstraction is right or when a piece of code feels genuinely off. AI can help us build more, and faster, but it cannot provide the taste required to build something that remains maintainable for years.

I am no longer especially interested in whether AI writes better code than humans. I am more interested in what kinds of engineering become newly possible when the cost of analysis and context reconstruction drops so dramatically. The grey zone feels different to me now. It is less speculative and more operational. Less about predicting what AI will become, and more about noticing what it is already making of us.

Healthy adoption does not happen by accident. It requires communities, shared language, and colleagues willing to turn vague intuitions into durable norms. AI has made it easier to produce artifacts. But it has also made it more important to deserve them.

The principles at the heart of this article were drafted by the talented engineers who make up our AI Dev Community: Adam, Alperen, Matthias, Yaroslav, Rym, and Vladimir. Writing the manifesto was their work. I am grateful, and extremely proud, to think alongside them. Thanks also to all the colleagues at Bikeleasing across every team who contributed ideas, challenged our assumptions, and made this a living conversation.